SaaS instincts suffocate AI products

7 SaaS PM reflexes that shrink AI products—and why data people already think differently.

If you’ve spent your career close to data, you’re probably better positioned to build AI products than most product managers in Silicon Valley. I haven’t seen anyone say that yet, so let me make the case. (Yeah I know, I might have a bias here ;))

It’s not because of technical skills (I for one don’t think they are important at all). It is because of how you think.

I’ve now spent sixteen months as Head of Product for an AI system, after years in product & data: BI startup founder, data PM, Head of Marketing for a data tool. Every single SaaS instinct I brought with me was inverted. Not slightly off. Inverted. And I still hear “other products do it this way” every week from smart, reasonable people who point at SaaS products and call them role models for something that isn’t SaaS anymore.

Let me walk you through why I think they’re wrong.

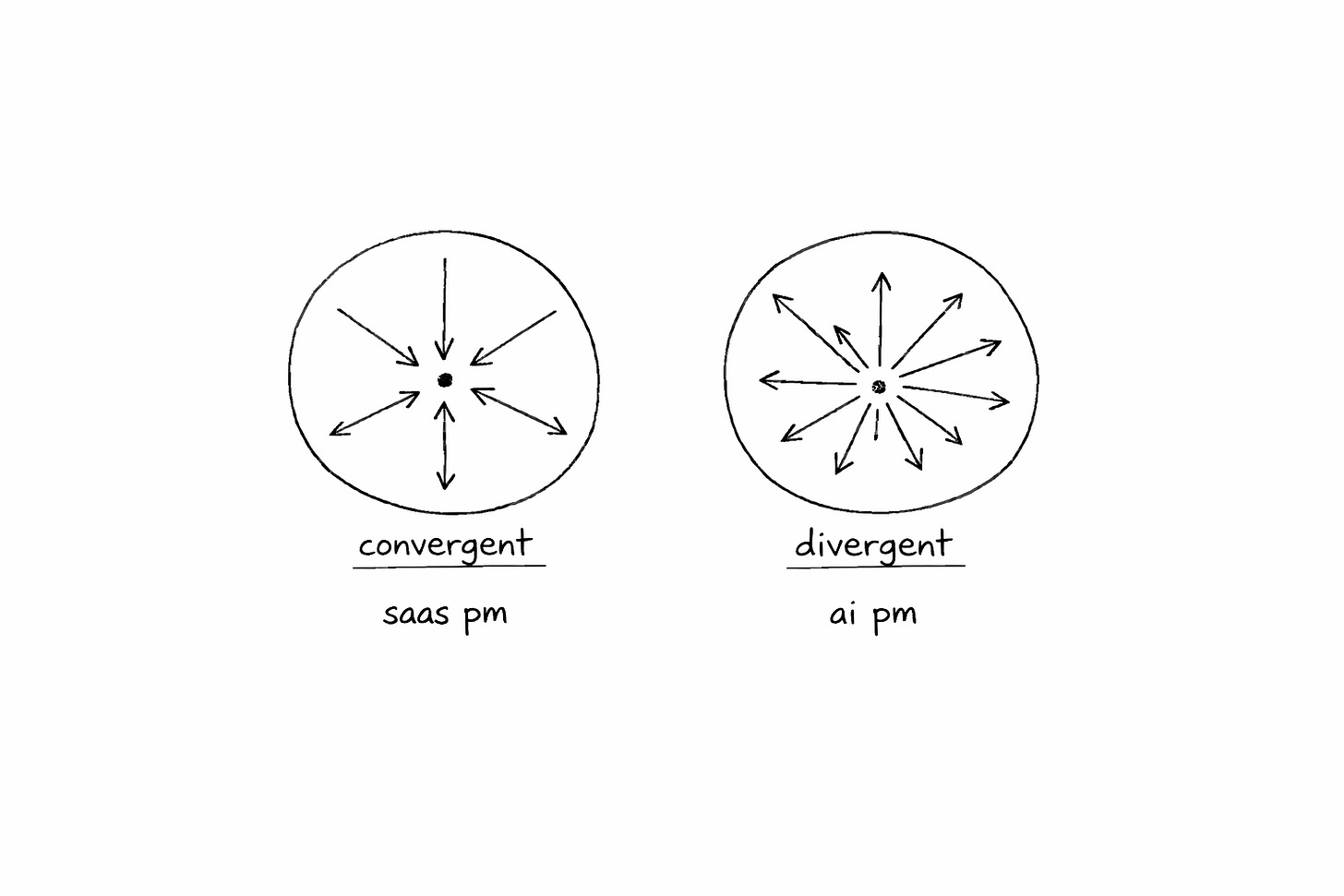

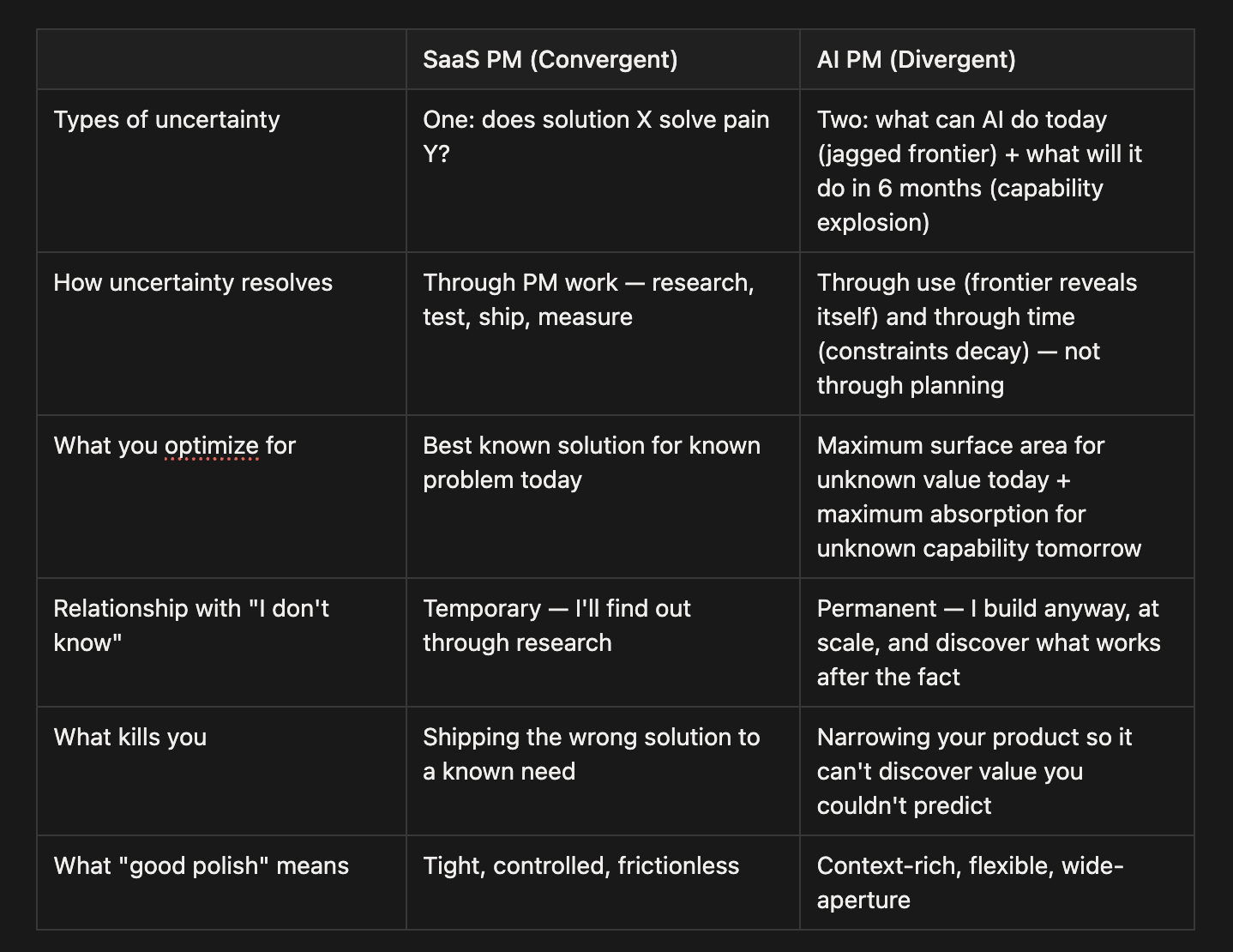

SaaS product management is a convergent discipline. You face one uncertainty — does solution X solve pain Y? — and you reduce it (and manage the risk). Research, prototype, test, ship, measure. Every sprint narrows the gap. Convergence IS the job. “I don’t know yet” is a temporary state you’re trained to exit as fast as possible.

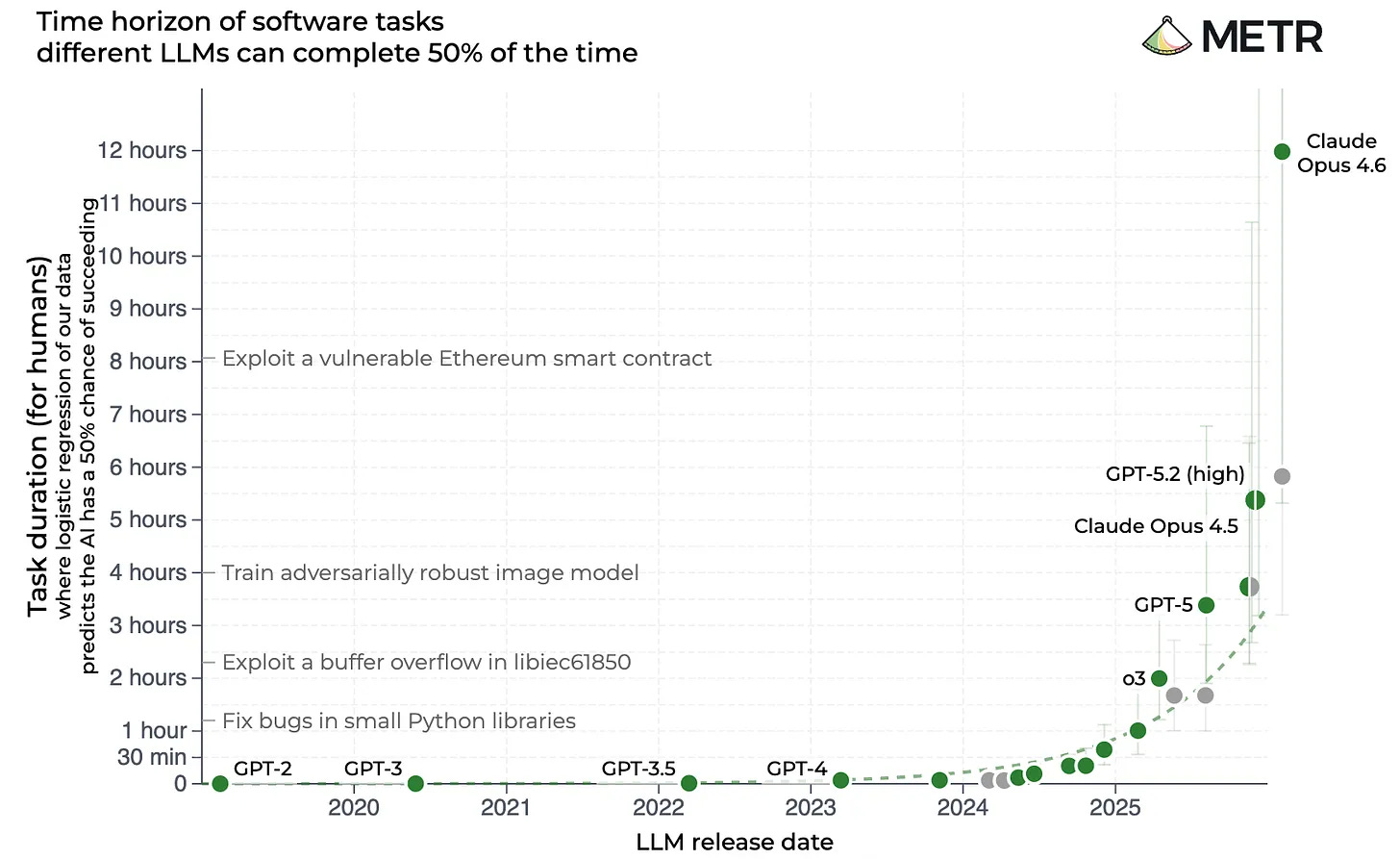

AI product management is a divergent discipline, and that turns everything on its head (at least in my experience). You face two uncertainties that no PM toolkit can resolve (correction: that NOTHING can resolve, that’s the whole point). The jagged frontier: you genuinely cannot predict which tasks AI handles brilliantly and which it botches. No research reveals this (and none can, it’s inherent in the idea of a general purpose technology); only use at scale does. The capability explosion: what’s impossible today becomes trivial in six months. Constraints decay on a six-month half-life. I’ve bet my career on the belief that AI today is at 1% of what it will be. I mean that literally. A hundredfold better in a decade, in a hundredfold more things. I don’t see a ceiling.

In SaaS, the PM reduces uncertainty. In AI, neither type yields to PM tools. So the job inverts: from “converge on the answer” to “build a product that thrives without one.”

Core thesis: SaaS PM is convergent. AI PM is divergent. And convergent instincts in a divergent environment will kill your product.

Core belief: AI is at 1% of what it will be. I don’t see a ceiling. Constraints decay on a six-month half-life.

Read the right column for a second. Comfort with irreducible uncertainty. Building systems where signal emerges through use, not specification. Letting reality update your model instead of forcing your model onto reality. Designing for compounding improvement over time.

Now if you’re a SaaS PM reading this: the good news is that convergent skills aren’t useless — they’re just not sufficient anymore. The transition is uncomfortable, but it’s learnable. Get comfortable with “I don’t know yet at all and will never know.” Get comfortable with betting big, and without clear paths to success.

If you’ve spent years close to data — leading data teams, founding data companies, studying how truth actually emerges from evidence — you’ve internalized the divergent mindset already. The academic discipline of letting evidence lead. The builder’s patience with signal that takes time to resolve. That comfort with “I don’t know yet, and I can’t force the answer” isn’t a weakness. It’s exactly what AI products require. You might be closer to AI-PM-ready than you think.

AI product value vs. SaaS product value is all about absorption

The entire value of an AI product is driven by two forces of uncertainty. Not by how well you reduce risk. Not by how polished your current features are. By how well you bet under uncertainty.

Key lesson: AI product value = how much AI progress you absorb + how fast you absorb it. What your product does today? That’s approximately zero in the equation. It’s the absorption term that dominates — over any 6-12 month horizon, the compounded value of what you funnel through dwarfs whatever you built last quarter.

To me (and my math brain) this looks something like this:

t = today: SaaS value = known problem + known solution + execution quality = 10 + 10 + 10 = 30.

t = 6 months: that value ≈ 0 (constraints died, capabilities doubled, your solution solves yesterday’s problem)

AI product value at ANY t = what the frontier reveals through use + what each capability doubling unlocks — and both terms compound = (something unknown)^t.

No matter where the SaaS value starts, it decays. The AI product value goes up exponentially.
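A quick sketch of that math in Python. Every number is an illustrative assumption, not a measurement: the SaaS value starts at 30 and halves every six months (a gentler reading of "≈ 0" that borrows the six-month half-life from above), while the AI product value starts at a made-up 1 and doubles with every six-month capability doubling.

```python
# Illustrative sketch only: every number here is an assumption, not a measurement.

HALF_LIFE_MONTHS = 6  # assumed: constraints decay on a six-month half-life


def saas_value(months: float, start: float = 30.0) -> float:
    """Value of a solution built for today's constraints, halving every half-life."""
    return start * 0.5 ** (months / HALF_LIFE_MONTHS)


def ai_value(months: float, start: float = 1.0) -> float:
    """Value of an absorbing product, doubling with every capability doubling."""
    return start * 2.0 ** (months / HALF_LIFE_MONTHS)


for months in (0, 6, 12, 24, 36):
    print(f"t={months:>2} months  SaaS={saas_value(months):6.2f}  AI={ai_value(months):6.2f}")
```

With these made-up starting points the two curves cross somewhere between month 12 and month 24. Change the starting values and the crossover moves, but the shape stays the same: one curve decays, the other compounds.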

In SaaS, the most valuable PM is the best risk reducer and quality builder. In AI, the most valuable PM is the smartest bettor under uncertainty. It’s a different game with a different winner.

Here’s how I think about it: your customers are outsourcing two things to you. (1) Understanding what AI can do today: the jagged frontier they can’t see. (2) Predicting where AI will be in six months: the capability curve they can’t forecast. They don’t need to know the knowledge cutoff of the underlying LLM. They don’t need to know which model is best for which task. They don’t need to decide whether web search should be on or off. They’ve outsourced all of that to you. And it’s your job to get it right for at least 80% of their use cases (for each individual user).

Think about it like electricity or the internet. When you buy an appliance, you don’t think about voltage regulation. When you open a browser, you don’t manage TCP packets. That’s the job of the product in between. Same here — and no, the answer is definitely not “teach everyone prompting”.

So your product has one job: be an absorption machine for technological progress of this big fat general purpose technology. Every product decision either increases or decreases your absorption surface. The seven inversions below are the seven ways SaaS instincts shrink it.

1. Don’t ship even if they desperately want it

The SaaS instinct goes something like this: customers are asking for it, sales is hammering on your door, ship it. Customer-driven development is the gospel.

Business case - Granola: The Granola team had exactly this problem. Their AI meeting assistant could only handle 30-minute meetings because of context window limitations. Customers wanted long-meeting support — I did too. Most teams would’ve done the “responsible” thing: spend months building complex chunking algorithms and token management solutions. Instead, Granola improved every other part of their tool but this one. When bigger context windows arrived months later, they plugged in overnight. 70% weekly retention. $250M valuation.

At MAIA, we had a similar situation. Our customers kept asking for a translations feature. “Build this and we’ll ditch our DeepL subscription.” We said no. We invested in richer context instead — glossaries, domain terminology, company-specific language.

Last month, our sales rep forwarded a message I didn’t expect: “Customer says MAIA translates better than DeepL.” Third time in sixty days. We don’t build a translator. We build enterprise AI knowledge management. But enterprise translation isn’t a language problem — it’s a context problem. DeepL can hit grammar and tone. It doesn’t know your project language, your approved terminology, your supplier jargon, your abbreviations. MAIA translates better not because it’s smarter, but because it’s been onboarded into your world.

SIDE NOTE: We’re still in a weird place with AI. That means both customers and companies building AI products will have to work hard to get the elusive productivity gains that AI offers (I’m a true believer, because I’ve seen them again and again across industries and users). For product builders, that means making amazing products. For customers, it means they’ll still have to basically “onboard” their AI tools for some time to come. Check out my article on that topic if you’re interested.

And now customers want the next thing: 40-page document translation, just like DeepL does. Will we build it? Nope, same principle. Output context windows will be big enough in 6-12 months that models handle this natively. And by then, our translations will be 2-3x better — not because we engineered around the page-count constraint, but because we spent those months deepening the context that makes translations actually sound like they came from inside the company.

We made the same bet with web browsing — said no, invested elsewhere, a capability expansion solved it without us building anything.

The difference is in time horizons. We should be better at forecasting capability curves than our customers. They’re outsourcing that job to us, and we should be grateful for the trust. Of course, you can only say no to features that aren’t the core thing your product does. If you’re a translation product, you translate. You can hold off on the 40-page constraint because you’re still delivering massive value on 5-page documents. The “no” only works when you can confidently say: “We’re delivering this other value to you right now, and the thing you’re asking for will be better when we get there.”

Every feature you ship to satisfy today’s demand is a feature you maintain instead of absorbing tomorrow’s capability. The hardest word in AI product management is “no” — said to a customer who is correct about their pain and wrong about the solution.

2. Friction is your AI’s food

The SaaS instinct: reduce friction everywhere. Every extra field kills conversion. Get to value in under 60 seconds.

Business case — Ramp could have done what every expense tool before them did: minimize input fields, auto-fill everything, get the receipt submitted in three taps. Instead, their AI agents are trained on patterns from 50,000+ customers, and they work precisely because users provide rich expense context, policy documents, historical patterns. In October 2025 alone, Ramp’s AI made 26.1 million automated decisions across $10 billion in spend. The richness of the input IS the intelligence. Strip that away in the name of friction reduction and you have a dumb calculator with a $32 billion valuation’s worth of potential left on the table.

At MAIA, we turned on mandatory interrogation-style onboarding that refuses to accept “Product Manager” as a sufficient description of what you do. The sales team hammered on our door (“are you sure? We should definitely turn this off for customer XYZ”). Six months later? The only thing we hear is praise. “How does MAIA know she should adapt the answer for an industrial marketer working in Asian markets?” is the only question we get now.

How do you actually pull this off with thousands of existing users? Two things. First, you tell them why — “we need this to give you dramatically better results.” Second, you show them why — immediately demonstrate the quality difference. And of course you make the friction itself as seamless as possible. We use AI-assisted onboarding that makes the five minutes feel like a conversation, not a form. The friction isn’t in the UX. The friction is in the information — and that information is your AI’s food.

SIDE NOTE: In data, you have GIGO: garbage in means garbage out. Most SaaS PMs have spent their careers believing that less in means faster out. Those are opposite instincts.

Five minutes of input friction creates compound value across every future interaction. The shortcut you remove isn’t a step you skip — it’s signal you’ll never get back.

3. Every option you add makes your AI dumber

The SaaS instinct: give users control. More options, more configuration, more “empowerment.” Let them choose.

Business case - Cursor, the AI coding tool that hit $2 billion in annualized revenue faster than any B2B product in history, made one of its smartest moves when it temporarily removed model selection entirely from its interface. Users could no longer pick between Claude, GPT-4, or Gemini. The backlash was brief. When Cursor restored the option with “Auto” as the default, developers stopped caring. Each manual model selection had been a micro-decision that pulled developers out of flow state. By automating routing, Cursor let the AI do what it’s better at — matching the right model to the right task — and freed users to focus on their actual work.

At MAIA, we had the same pattern. Users could pick GPT-4 vs. Claude vs. Gemini. They picked the optimal model 15% of the time. We removed the choice. Auto-selection. Optimal model usage jumped to 95%. Three persistent customer problems vanished overnight. Not because the AI got smarter. Because we stopped letting users make it dumber.

And you know what? Just like for Cursor, this means users automatically get the best model whenever there’s an upgrade in the back end. You’re handing them the best tech and rolling it out to 100% of your users right away. Every single option cuts pieces off that 100%.
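Here is a minimal sketch of what "Auto" can look like under the hood. This is not how Cursor or MAIA implement routing. The task categories, the keyword rules, and the model names (`fast-model`, `reasoning-model`, `long-context-model`) are all hypothetical. The point is the shape: users never see the table, so changing one entry upgrades everyone at once.

```python
# Hypothetical sketch of automatic model routing; names and rules are made up.

ROUTES = {
    "code": "reasoning-model",
    "long_document": "long-context-model",
    "default": "fast-model",
}


def classify(prompt: str) -> str:
    """Crude stand-in for a real task classifier (assumption: keyword rules)."""
    if len(prompt) > 20_000:
        return "long_document"
    if "def " in prompt or "stack trace" in prompt.lower():
        return "code"
    return "default"


def pick_model(prompt: str) -> str:
    """Choose the model for the user; there is no user-facing override."""
    return ROUTES[classify(prompt)]


print(pick_model("Why does this stack trace mention a KeyError?"))  # reasoning-model
print(pick_model("Summarise our Q3 priorities"))  # fast-model

# A model upgrade is a one-line change that reaches 100% of users immediately.
ROUTES["code"] = "reasoning-model-v2"
print(pick_model("def parse(x): ..."))  # reasoning-model-v2
```

A real classifier would be far smarter than keyword matching, but the design choice is the same: the routing decision lives in the product, not in a dropdown.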

Here’s the dynamic most people miss: your users have outsourced the prediction to you. That’s what they’re paying for. When you give them an option to override the AI’s judgment, you’re telling them one of two things — either you don’t trust your own system, or you were too lazy to build the smart logic that makes the right call automatically. Neither is a good look. And both make your product worse.

And honestly, it also makes you lazy. Every toggle you add is a solution you didn’t build.

Instead of figuring out how to make the AI smart enough to make the right call, you punted the decision to users who know less about AI capabilities than you do.

Every decision you force on users is a decision the AI can’t make autonomously. The products users call “smartest” are the ones that ask the fewest questions about how to operate.

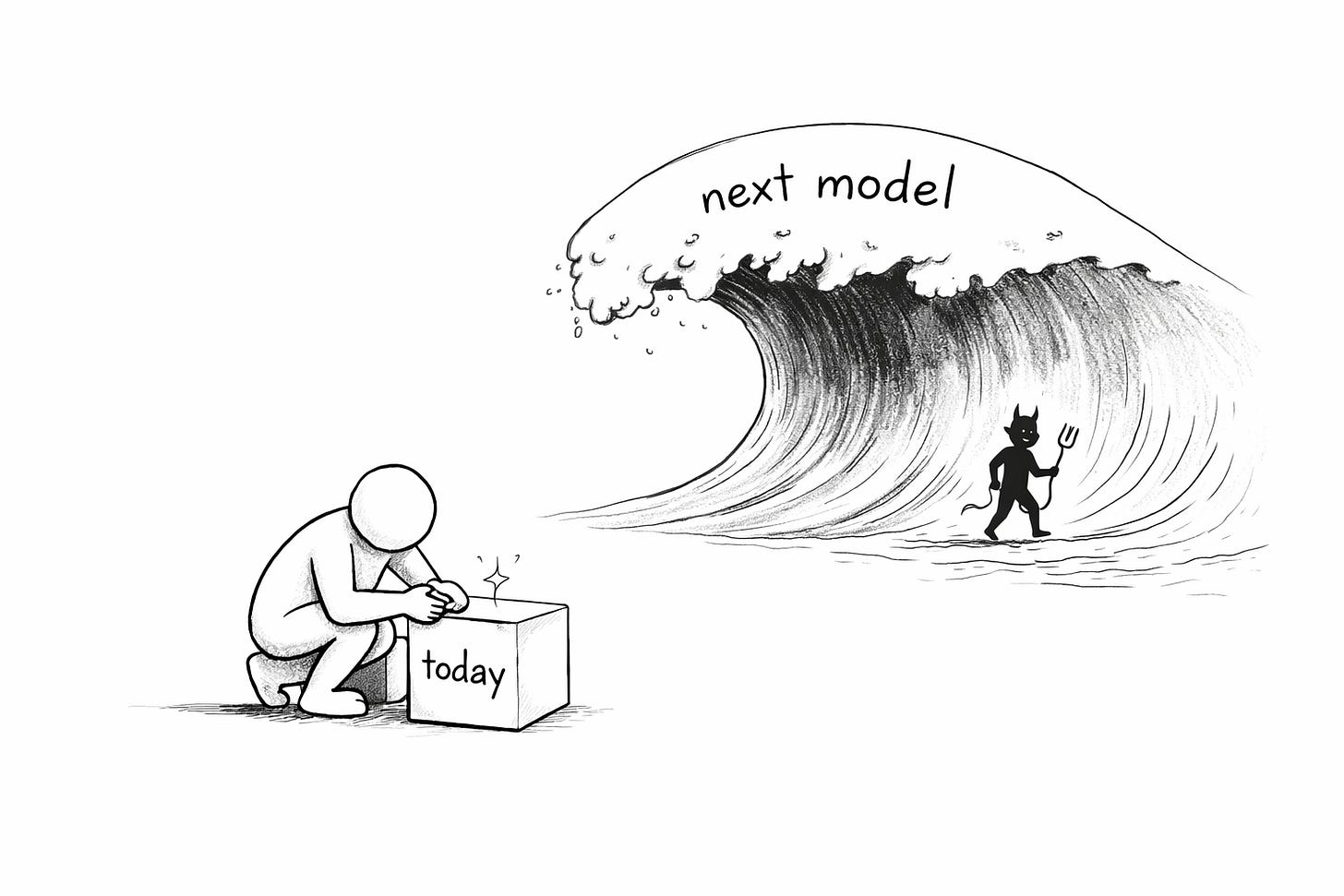

4. Ship for the model that’s coming, not the one you have

The SaaS instinct: ship the best product possible today. Optimize for current constraints. Users need solutions now, not promises.

Business Case - Replit launched Ghostwriter in October 2022 as basic autocomplete — four features at $10 a month. The product was, frankly, mediocre. But the team made a critical architectural choice: they built a modular “society of models” where any component could be swapped independently. They didn’t engineer complex workarounds for reasoning limitations or context window constraints. They accepted those constraints and built clean primitives that were ready for the next generation.

When Claude 3.5 Sonnet dropped, Replit Agent could suddenly plan, code, test, and deploy entire applications from natural language — not because Replit built those capabilities, but because their architecture absorbed them. AI-generated apps grew from 50,000 in 2023 to 1.5 million in 2024 to 5 million in 2025. Revenue went from $16 million to $253 million. That’s 1,556% year-over-year growth from an architecture decision, not a feature launch.

This is the same bet Granola made with meeting length — accept the constraint, build for absorption, win when the constraint dies.

At MAIA, when Claude 3.7 integrated into our system, 90% of our prompting challenges disappeared overnight without us building anything. The best engineering hours we spent were the ones where we built nothing — we just made sure nothing blocked the next model from working.

SIDE NOTE: this is the Constraint Decay Law from my RAG article applied to product decisions instead of engineering decisions. Same law, different victim. In engineering it kills your architecture. In product it kills your roadmap.

How do you actually do this? The Granola trick is the template: YES you get meeting transcripts, but only up to 30 minutes. YES you get translations, but only for 5 pages at a time. You deliver genuine value within the current constraint, you make the constraint visible and graceful rather than hidden and hacky, and you build your architecture so that when the constraint dies in six months, you absorb the improvement without touching a line of code.
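A rough sketch of "visible and graceful" in code, using the translation example. Everything here is hypothetical: `ModelLimits`, `max_pages`, and the `translate` stub are illustration only, and the actual translation call is left out. What matters is that the constraint lives in one place and the user is told about it, instead of being hidden behind chunking logic.

```python
# Hypothetical sketch: the constraint lives in one place and is shown to the user.

from dataclasses import dataclass


@dataclass
class ModelLimits:
    max_pages: int  # assumed to come from whatever model is currently plugged in


def translate(pages: list[str], limits: ModelLimits) -> str:
    """Translate what fits and say so plainly instead of chunking around the limit."""
    if len(pages) > limits.max_pages:
        return (
            f"Translated the first {limits.max_pages} of {len(pages)} pages. "
            "Longer documents are not supported yet."
        )
    return f"Translated all {len(pages)} pages."


document = [f"page {i}" for i in range(1, 41)]  # a 40-page document

print(translate(document, ModelLimits(max_pages=5)))
# When a model with a larger output window arrives, only the limit changes.
print(translate(document, ModelLimits(max_pages=100)))
```

There is no workaround code to delete later. When the constraint dies, the limit value moves and the product logic stays untouched.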

The team that ships the “worst” product today ships the best product in six months. Every engineering hour spent working around a constraint that dies in six months is negative value — not zero, negative.

5. Every approval gate you add is a lesson your AI never learns

The SaaS instinct: keep humans in the loop. Responsible AI. Risk mitigation. Never let the machine decide without oversight.

Sierra AI built the clearest proof of what happens when you replace approval gates with intelligent autonomy. Their architecture chains a reasoning agent with a supervisor agent — if each is independently 90% accurate, chaining them yields 99% effectiveness. No human in the loop for routine decisions. The result: customers see 70-90% autonomous case resolution with quality scores around 4.5 out of 5. Sierra hit $100 million in ARR in 21 months.
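The arithmetic behind that 99% is worth spelling out. The sketch below is the textbook calculation, not Sierra's published method. It assumes the two agents fail independently and that the supervisor catches the reasoning agent's mistakes at its own 90% rate, so only 10% of 10% of cases slip through.

```python
# Worked arithmetic under a strong assumption: the two agents fail independently.

def chained_effectiveness(agent_accuracy: float, supervisor_accuracy: float) -> float:
    """Share of cases that end up right when a supervisor reviews the agent's errors."""
    missed_by_both = (1 - agent_accuracy) * (1 - supervisor_accuracy)
    return 1 - missed_by_both


print(round(chained_effectiveness(0.9, 0.9), 4))  # 0.99
```

If the errors are correlated, for example because both agents share the same blind spot, the gain is smaller than this number suggests.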

The distinction isn’t “no guardrails” vs. “all the guardrails.” It’s teach vs. cage. Give the AI context, principles, and goals — not restrictions and approval gates.

At MAIA, our Insight Hub automatically collects learnings from every interaction and shares them with the whole team. Yes, that’s scary. My engineers weren’t happy about the autonomous part. We had a heated discussion; everyone wanted to add deletion options, opt-ins, switches, and everything else that stops the system from learning automatically. But in the end, even the sales team caved and realized that we have to make it autonomous if we want it to have any impact at all.

Our engineering team did an amazing job and added four levels of security. The insight collection happens because that’s how the product learns. Every time a human gate prevents the AI from acting, a learning opportunity dies. The AI doesn’t just fail to complete the task — it fails to get smarter from completing it. Multiply that across thousands of interactions and you’ve built a system that’s structurally prevented from improving.

“Responsible AI” that prevents AI from acting produces less responsible outcomes than teaching AI to act well and showing its work.

6. Make it trustworthy, not magical

The SaaS instinct: make it magic. Hide the complexity. The best UX is invisible. “It should just work.”

AI is not plumbing. AI is a collaborator. And trust in a collaborator doesn’t come from hiding how they work — it comes from seeing enough to calibrate. That might be showing reasoning chains. It might be a loading signal that communicates “searching 400 pages right now.” It might be the AI flagging what it can’t do. It might be sound, imagery, animation — whatever signal the user needs to calibrate their trust. The mechanism varies. The principle doesn’t: users need to calibrate trust, and magic prevents calibration.

SIDE NOTE: There’s a saying in the cyber sec space that “feeling safe” and “being safe” are completely separate things in humans. You can have either without the other. Perfect example? The little green lock next to a URL. Do you know what SSL really is? ⇒ Think about trusting autonomous systems the same way.

Harvey AI, the $11 billion legal AI platform, developed its own Source Score metric. Their standard: an answer is not complete unless it accurately refers to source material through inline citations. When they integrated newer models with “capability awareness,” the AI started flagging what it can’t do — actively telling users where its reasoning is uncertain. Showing limitations builds more trust than hiding the gaps. Lawyers grant Harvey autonomy precisely because they can see its reasoning and verify its sources.

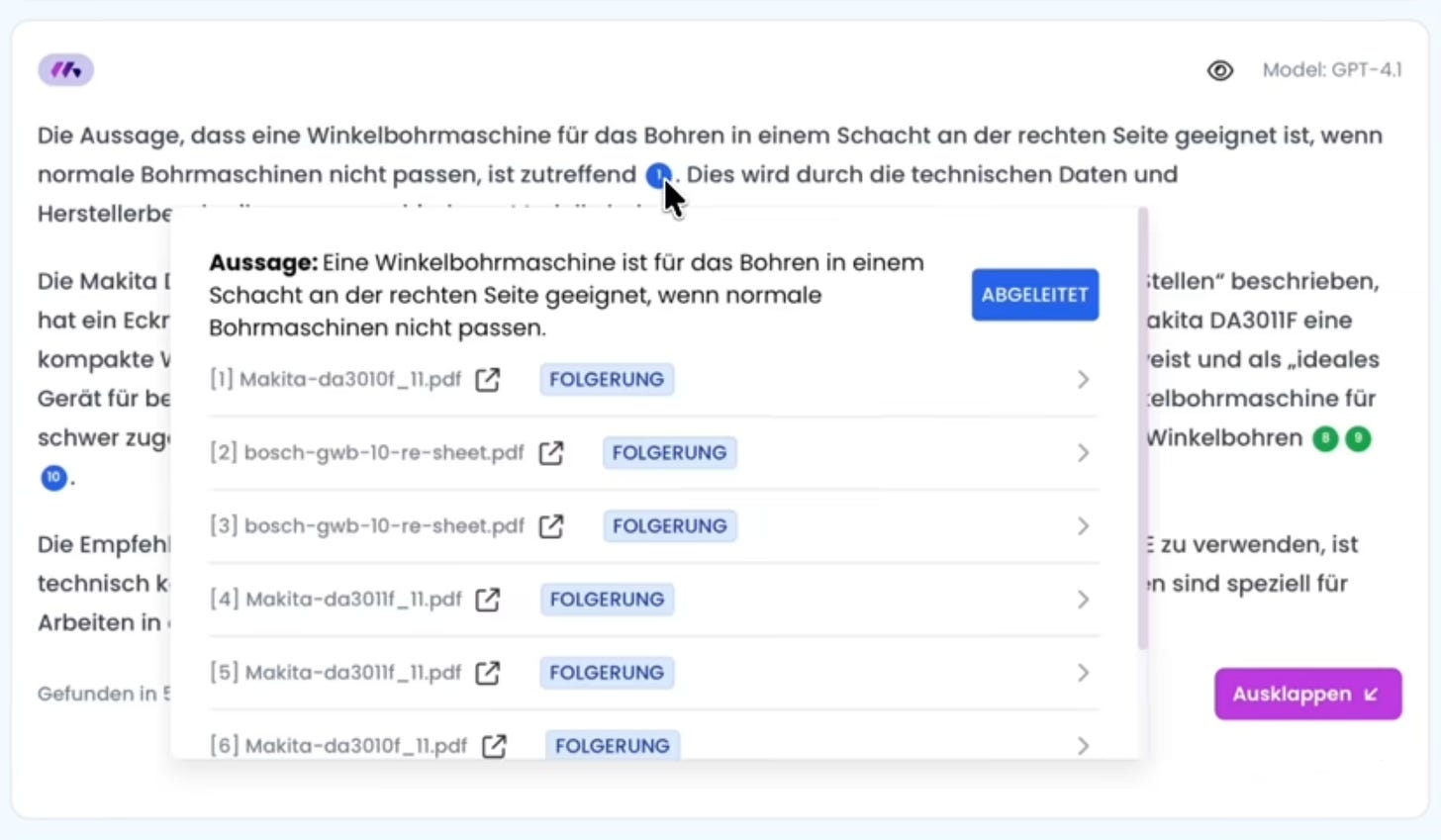

At MAIA, we have two killer features. One of them is the High Precision Mode. It does something simple: It generates a full response to a question, then extracts all facts and assumptions made in the answer (usually 20-50) and then verifies them again using all source material available (and other knowledge sources we connect to). As you might imagine, this takes 50% longer than simply generating a response.
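The generate, extract, verify loop can be sketched roughly like this. This is not MAIA's implementation: `extract_claims` and `is_supported` are hypothetical stand-ins (sentence splitting and substring matching) for what would be model calls in a real system, and the sample sources are invented.

```python
# Hypothetical sketch of a generate -> extract -> verify loop; not MAIA's code.

def extract_claims(answer: str) -> list[str]:
    """Stand-in for an LLM call: treat every sentence as one claim."""
    return [s.strip() for s in answer.split(".") if s.strip()]


def is_supported(claim: str, sources: list[str]) -> bool:
    """Stand-in for verification: a claim counts only if a source contains it."""
    return any(claim.lower() in source.lower() for source in sources)


def verify_answer(answer: str, sources: list[str]) -> dict[str, bool]:
    """Map each extracted claim to whether the sources back it up."""
    return {claim: is_supported(claim, sources) for claim in extract_claims(answer)}


sources = ["The contract runs until 2027. The supplier is based in Osaka."]
answer = "The contract runs until 2027. The supplier is based in Kyoto."

for claim, ok in verify_answer(answer, sources).items():
    print("supported  " if ok else "unsupported", claim)
```

The second pass over every claim is where the extra waiting time comes from: each one is checked against the sources again after the answer already exists.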

So previously, we had the option to turn this on when users wanted it (10% did so); now we simply have it on by default, always (100%). Turns out, no one cares about 50% additional waiting time on every single query, but everyone cares about getting well-grounded responses! (And no, we do it differently from others. We check whether we think the fact is a straight fact - green below - or whether it is a conclusion we drew from existing facts: a derivative truth.)

The black-box product wins demos. The trustworthy product wins renewals. Every demo you lose on polish, you win back tenfold in retention.

7. Your AI is only as smart as its worst dimension, not its best

Each of the six unlearnings above targets a different dimension of how your AI product contacts reality — how much users tell it, how much it can do on its own, how fast it learns, whether it’s built for tomorrow, whether users can calibrate trust, whether you ship for real demand or predicted demand.

Core thesis: these dimensions don’t add — they multiply. A zero in any one collapses the product of all others.

You can be world-class at five and still have a dead product. Your brilliant transparency means nothing if the AI can’t act on what it knows. Your rich input means nothing if the system doesn’t learn from it. “Being great at one thing” doesn’t work in AI the way it works in SaaS.

Here’s why this is life-or-death, not theoretical: your product’s value distributes across your user base, across each customer, across each user’s use cases. Turn off any dimension for any slice, and that slice gets zero value from your product over the medium term. Not reduced value — zero. Because it’s multiplication, not addition.
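A tiny sketch of why multiplication behaves so differently from addition. The dimension names paraphrase the six above and the scores are made up; nothing here is a real metric.

```python
# Illustrative sketch: dimension names follow the article, the scores are made up.

from math import prod

scores = {
    "input richness": 0.9,
    "autonomy": 0.9,
    "learning": 0.9,
    "built for tomorrow": 0.9,
    "trust calibration": 0.9,
    "real demand": 0.9,
}

print(round(prod(scores.values()), 3))  # 0.531

scores["autonomy"] = 0.0  # one dimension switched off for some slice of use cases
print(prod(scores.values()))  # 0.0

# The additive view hides the problem: the average still looks healthy.
print(round(sum(scores.values()) / len(scores), 2))  # 0.75
```

An averaged scorecard would still report 0.75 for that slice. The product says zero, which is the claim being made here.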

I keep having heated discussions with our engineering team about this. I say “we will NOT allow users to turn off web crawling.” They say “are you serious? We promise trust. If they really want that control, they should have it. I can easily tell you ten cases where it’s absolutely necessary.” I say “well, then we should build a good AI in front that makes that judgment call.” “Why would you piss off our users?”

The reason isn’t stubbornness. It’s multiplication. If we turn off web crawling, some fraction of use cases for each user gets zero value from our product. Not reduced. Zero. Over the next six months, that’s a dimension of their experience where we’ve delivered nothing while capabilities doubled around us.

You don’t have to piss off users. You DO have to protect every dimension. Smart defaults, sensors, resets, AI-powered judgment calls — lots of options. The one option you don’t have is letting any dimension hit zero.

This IS how data people already think. Bottleneck analysis. Pipeline thinking. The weakest link determines throughput. Chain-linked processes where a 10% improvement in the wrong step produces 0% improvement in the outcome. You’ve been doing this analysis your whole career. Now point it at the product layer.
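For anyone who wants the bottleneck intuition in a few lines, here is a sketch with made-up stage names and capacities. It models throughput as the minimum over stages, which is the simplest version of the weakest-link idea.

```python
# Illustrative sketch: stage names and capacities are made up.

def throughput(stages: dict[str, float]) -> float:
    """A chain-linked process moves only as fast as its slowest stage."""
    return min(stages.values())


pipeline = {"ingest": 100.0, "transform": 40.0, "serve": 80.0}
print(throughput(pipeline))  # 40.0

pipeline["ingest"] *= 1.10  # 10% better at a step that was never the bottleneck
print(throughput(pipeline))  # 40.0, no change

pipeline["transform"] *= 1.10  # the same 10% applied to the bottleneck
print(round(throughput(pipeline), 1))  # 44.0
```

Same effort, different step, completely different outcome. That is the analysis to point at the product layer.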

“Other products do it this way.”

I’ll hear it again next week. The sales team will want something easy to demo. A stakeholder will ask why users can’t pick their own model. Someone will insist on human approval for a decision the AI makes better. A customer will beg for a feature that solves a constraint that dies in six months.

They’ll be right about every SaaS product they’ve ever worked on. They’ll be wrong about this one.

You know that feeling of watching a dashboard tell you something you didn’t expect, and instead of explaining it away, sitting with it until the signal resolves? That’s the muscle. You’ve been training it for years — on messy data, on pipelines that break, on stakeholders who want certainty you can’t give them yet.

The SaaS PMs will keep converging. They’ll reduce friction, add approval gates, optimize for constraints that die in six months. And they’ll feel like experts the whole time.

You don’t need to learn ML. You need to unlearn your SaaS instincts. And if you’ve spent your career close to data, you’ve already started.

The models will double again in six months. Your product either absorbs that, or your best instincts prevent it. Now you know which ones.

Stuff I suggest you read as follow up

Nicolas Bustamante, CEO at Fintool, shared a few related ideas I really liked. His piece “Model-Market Fit” tackles a slightly different angle and puts it nicely: “The question isn’t whether to be early. It’s how early, and what you’re building while you wait.” Also, he uses equations. I love equations.

Ethan Mollick, professor and researcher on general purpose technologies (kind of), coined the term “jagged frontier” and has a great article describing it, based on his landmark study (the BCG one you have likely heard about all the time; it’s become the basis for a lot of AI thought leadership and “best practices”. See the piece I’ll publish in a few weeks on that in general).

Chris Pedregal, founder of Granola (used as business case above), already shared a couple of related thoughts almost 2 years ago. Worth a read, still true.