Your AI brainstorm is broken

One exercise that turns chatbot lists into ML projects that actually matter.

Here’s what my data team proposed when asked for AI use cases at Unite:

Recommendation systems.

Marketing ML.

Personalization improvements.

“Interesting, let’s prioritize… or not.”

Here’s what we proposed after a 30-minute exercise:

Identify every company in Europe that should be on our network but isn’t.

Find businesses linked in the real world but missing from our platform.

Detect companies doing a fraction of their potential business through us — meaning they had connections we could pull onto the network.

Same team. Same afternoon. Different question.

The first list improves metrics. The second list changes economics. Management didn’t nod politely at the second list. They funded it, and they let me expand and dedicate a whole team to it.

What changed wasn’t our creativity. It was one question, asked differently, that opened up a space we’d been trained to ignore. Here’s what we did. I call it the Perfect World Session.

Perfect World Session — the 30-minute split format:

Pick X (the value-chain choke point where better knowledge changes economics, not just features)

State the rule: no feasibility talk. None. Not yet.

Ask the question: “If we knew everything about X, what would we do that’s impossible today?”

Generate as many “impossible” actions as you can. Go wild. Stay in the perfect world. 15 minutes.

Break. Walk around. Get coffee. Let the ideas settle.

Come back. For each idea, ask: what data gets us 60-80% there? What’s the smallest prototype that would prove this works?

It’s simple, very hard, and no fun. Session 1 is imagination. Session 2 is engineering. They must not happen at the same time, or you’ll end up with the first list above.

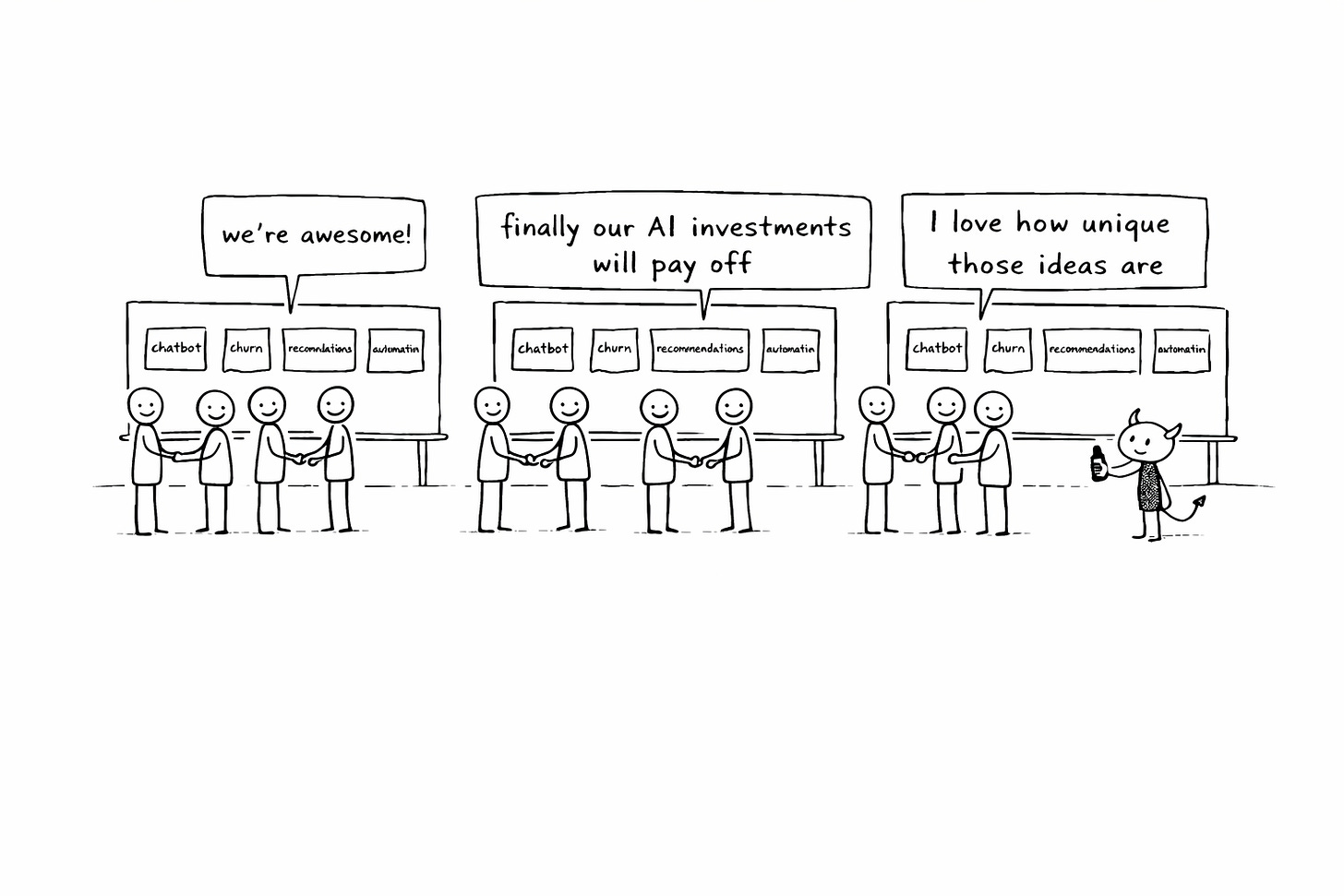

“Where could we use AI?” always produces the same list

Someone senior says “we need to find AI opportunities.” You break into groups, fill whiteboards, come back with sticky notes. Chatbots. Report automation. Recommendation engines. Churn prediction.

You know what’s on every other company’s whiteboard right now? The exact same things. I’ve talked to 50+ data leaders and the overlap is embarrassing — as if there’s a universal AI brainstorm template everyone secretly downloads from the same place.

I can save you all the hassle: just google “List of AI initiatives that will save my job and deliver nothing.”

Most AI roadmaps are automation backlogs pretending to be strategy.

Zapier CEO Wade Foster recently celebrated how Fetch shut down the entire company, over 1,000 employees, for a week-long AI hackathon. The headline outcome was “hundreds of automations built.” Of course it was. The CEO of an automation company told a thousand people to find ways to use AI, and they built automations. That’s the hammer-and-nails problem dressed up as corporate transformation. None of those hundreds of automations will change Fetch’s business economics. They’ll save time on tasks that already exist (though more likely, they will add complexity and create cost, rather than save time). That’s fine. It’s just not strategy (thank you, Wade).

The problem isn’t creativity. The problem is the question. “Where could we use AI?” starts from the technology and scans for places it fits. You end up with chatbots. Every time. The 10x idea? Not visible. Not from this angle. Not ever.

If we knew everything about X, what would we do that’s impossible today?

Back in 2022, Unite was pivoting toward becoming a B2B network. My data team needed to prove it could do more than serve dashboards. I needed ideas good enough that management would actually invest in ML capability, not just nod politely and move on.

So after staring at our sad little list of recommendation engines, I studied success stories, failures, and good old business history. What I came up with was the Perfect World Session. I got a group of smart, involved people in front of a whiteboard, started mapping parts of our new business strategy, and asked one question:

“If we knew everything — literally everything — about X, what would we do that’s impossible today?”

Replace X with the part of your value chain that matters most: customers, suppliers, products, transactions, competitive landscape, network graph. This is hard work — not a fun brainstorm. You need deep business and strategic understanding to choose the right X. Which means you have to understand your value chain deeply first.

How to pick X:

Good X = where better knowledge would change economics (growth, margin, retention, risk)

Bad X = where better knowledge improves a feature (nice-to-have optimization)

Test question: “If we knew everything about X, would we ship a feature… or redesign the business?”

Pick the wrong X and you get incremental ideas with perfect-world wrapping. Pick the right one and the room goes quiet in a different way.

At Unite, X was “every company in Europe.” There was this awkward pause where people looked at me like I’d asked a trick question. One person started with “well, we’d obviously improve our matching algo—” and I stopped them. No. Forget the matching algorithm. Forget our current product. If you had perfect knowledge about every business in Europe, every relationship, every transaction, every connection — what would fundamentally change in the value we deliver?

Then someone said, almost hesitantly: “We’d know which companies should be on our platform but aren’t.”

And the energy in the room shifted completely.

Not “improve recommendations for existing users” — that’s optimizing the current game. Instead: which of all the companies in Europe would be a perfect match inside our network? That’s the growth engine, not a feature improvement.

In a normal brainstorm, this idea gets killed in ten seconds flat. “We don’t have external company data, that’s not realistic, let’s move on.” But we stayed in the perfect world a few minutes longer. And more ideas dropped out, each one weirder and more interesting than the last: companies linked in the real world but not yet connected on our platform. Companies doing only a fraction of their business through us.

None of these are “apply algorithm X to business case Y” ideas. They’re hard. They require building things that don’t exist yet.

Then we took a break. Came back. And did the translation — the part where engineering earns its seat:

What would prove this is true? (What signals would we need to see?)

Where could we get those signals? (What data sources exist, even imperfect ones?)

What’s the smallest model that would be useful? (What gets us 60-80% there?)
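To make that third question concrete, here is a minimal sketch of what a "smallest useful model" could look like for the idea of companies doing only a fraction of their business through the platform. It is an illustration under invented assumptions, not a description of any production system: the `Company` class, its field names, the `max_share` threshold of 0.3, and all the numbers are hypothetical. The only real idea is the ratio itself, observed on-platform spend divided by a rough external estimate of total spend.

```python
from dataclasses import dataclass


@dataclass
class Company:
    name: str
    platform_spend: float         # what we observe on our own platform
    estimated_total_spend: float  # rough external estimate (hypothetical signal)

    @property
    def share(self) -> float:
        """Fraction of the company's estimated spend that runs through us."""
        if self.estimated_total_spend <= 0:
            return 1.0  # no usable estimate: treat as nothing left to capture
        return min(self.platform_spend / self.estimated_total_spend, 1.0)

    @property
    def untapped(self) -> float:
        """Estimated spend that currently happens outside the platform."""
        return max(self.estimated_total_spend - self.platform_spend, 0.0)


def underpenetrated(companies, max_share=0.3):
    """Return companies whose share is below max_share, largest untapped spend first."""
    flagged = [c for c in companies if c.share < max_share]
    return sorted(flagged, key=lambda c: c.untapped, reverse=True)


# Invented toy numbers, for illustration only.
companies = [
    Company("Alpha GmbH", platform_spend=50_000, estimated_total_spend=1_000_000),
    Company("Beta SARL", platform_spend=400_000, estimated_total_spend=500_000),
    Company("Gamma BV", platform_spend=20_000, estimated_total_spend=100_000),
]

for c in underpenetrated(companies):
    print(f"{c.name}: {c.share:.0%} share, {c.untapped:,.0f} untapped")
```

Even a crude estimate of total spend is enough to produce a ranked list someone can sanity-check by hand, which is the whole point of the 60-80% question: prove the signal exists before investing in a better model.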

The potential value dwarfed anything on the original list — because we weren’t optimizing an existing feature, we were changing the growth economics of the entire business.

That’s what I pitched to top management. It got funded. But the real outcome wasn’t the budget. That second list allowed me to split off part of the team as a dedicated ML team and position them not as a support function but as a value creation engine. Not “we help other teams make better decisions.” Instead: “we find revenue the business didn’t know it was leaving on the table.”

The first list would have kept us as a service desk with fancier tools. The second list changed what the data team was for.

Your feasibility filter is running on 2022 intuitions

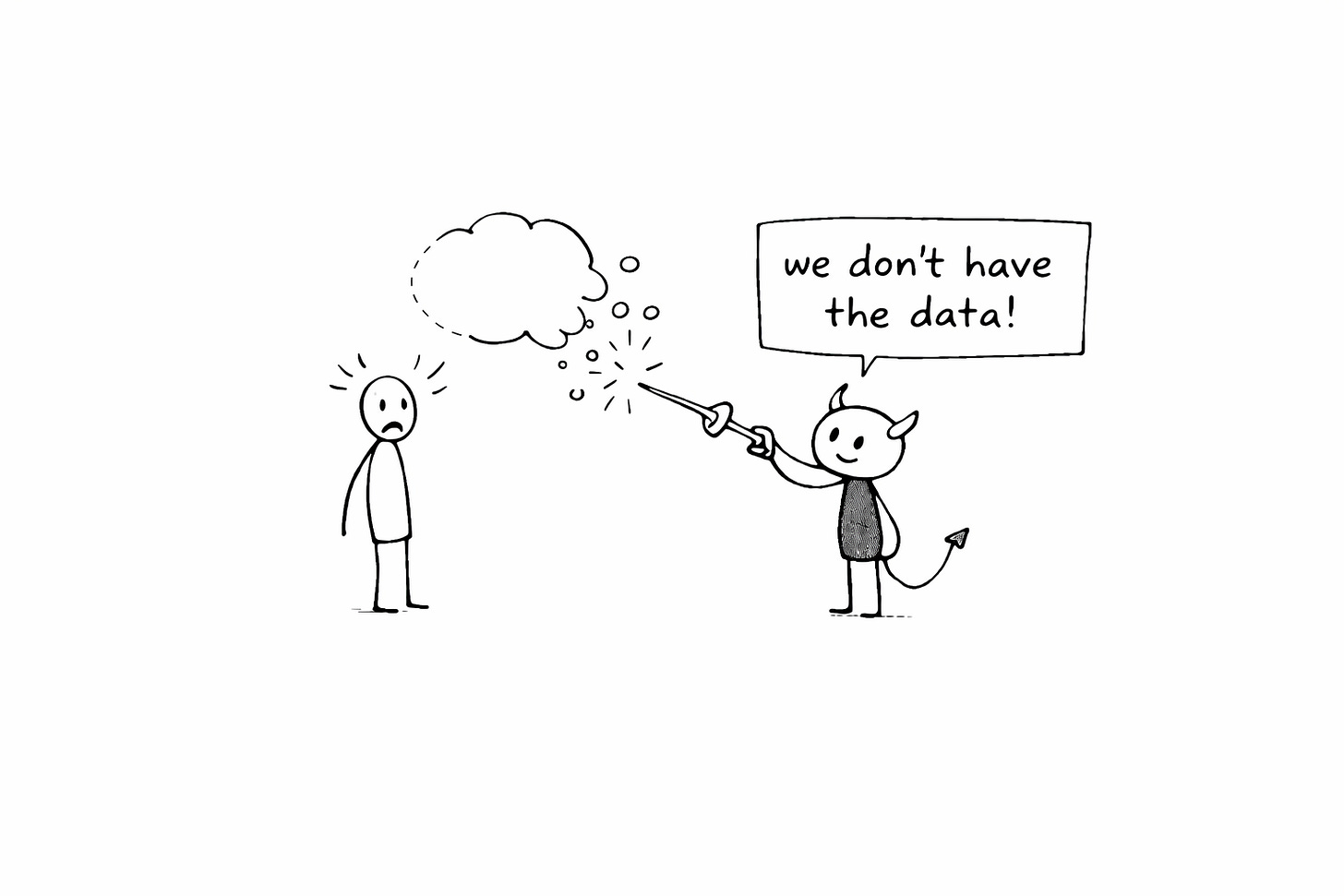

Most “AI brainstorming” is just feasibility triage in disguise.

Before an idea even fully forms, someone in the room — often you, inside your own head — asks “but do we have the data for that?” or “that sounds massive, let’s stay realistic.” The idea dies before anyone evaluates whether it’s actually valuable. You think you brainstormed all possibilities. You didn’t. You brainstormed the possibilities that survived the feasibility filter. That’s a much smaller, much more boring set.

Here’s why this matters more right now than it ever has: AI has moved the boundary of what’s possible so far that the ideas you rejected as “unrealistic” a few years ago are buildable today. LLMs can extract structured information from unstructured sources at a scale that was genuinely impossible for a mid-sized data team in 2022. The space of “things we could know if we tried” has expanded by an order of magnitude.

But your feasibility filter hasn’t updated. You’re rejecting 2026 possibilities with 2022 intuitions about what’s buildable. You dismiss perfect-world thinking as fantasy, so you propose chatbots — because chatbots feel realistic.

The 10x idea lives in exactly the space you dismissed as impossible.

Taiichi Ohno was a machine shop manager at Toyota, not the CEO, not a strategist. He didn’t ask “how do we speed up our assembly line?” He asked “what would a perfect supply system look like?” and turned just-in-time manufacturing into a working production system. JIT only works if you have near-perfect information about suppliers and ongoing processes. Toyota asked that question. And you should, too.

Five questions that tell you if your AI roadmap is strategy or a to-do list

Pull up the AI roadmap your team produced last quarter. Ask yourself:

Can I point at a single item and say “this will deliver 10x value to our customers”?

Would a competitor produce the same list in an afternoon?

Did we start from “where could we use AI?” or from “what would change everything if we knew it?”

Did anyone kill an idea because “we don’t have that data”?

Is there a single item on this list that made management do anything other than nod politely?

If none of these questions sting, you’re either exceptional — or your roadmap is lying to you.