Your AI Agent Isn't Broken. Your Definitions Are.

Someone on your analytics team kept "customer" meaning one thing everywhere. They just left.

If your AI agent can’t reliably operate on basic business principles, the problem is probably not the model. It’s that your company has three definitions of the same metric, and humans have been quietly patching that over for years.

When Brian Chesky asked Airbnb’s data teams which city had the most bookings the previous week, Data Science and Finance gave different answers. Different tables, different metric definitions, different business logic. Both correct. Neither agreed. That’s an AI evaluation prompt, and your company would fail it right now. A human analyst muddles through — squints at both numbers, walks down the hall, asks someone. An AI agent picks whichever definition it encounters first and acts on it a thousand times before anyone notices.

AtScale made this concrete: an LLM against raw enterprise data without semantic grounding was wrong 80% of the time. With a layer telling it what each metric means — 92.5% accurate. The gap isn’t intelligence. It’s meaning.

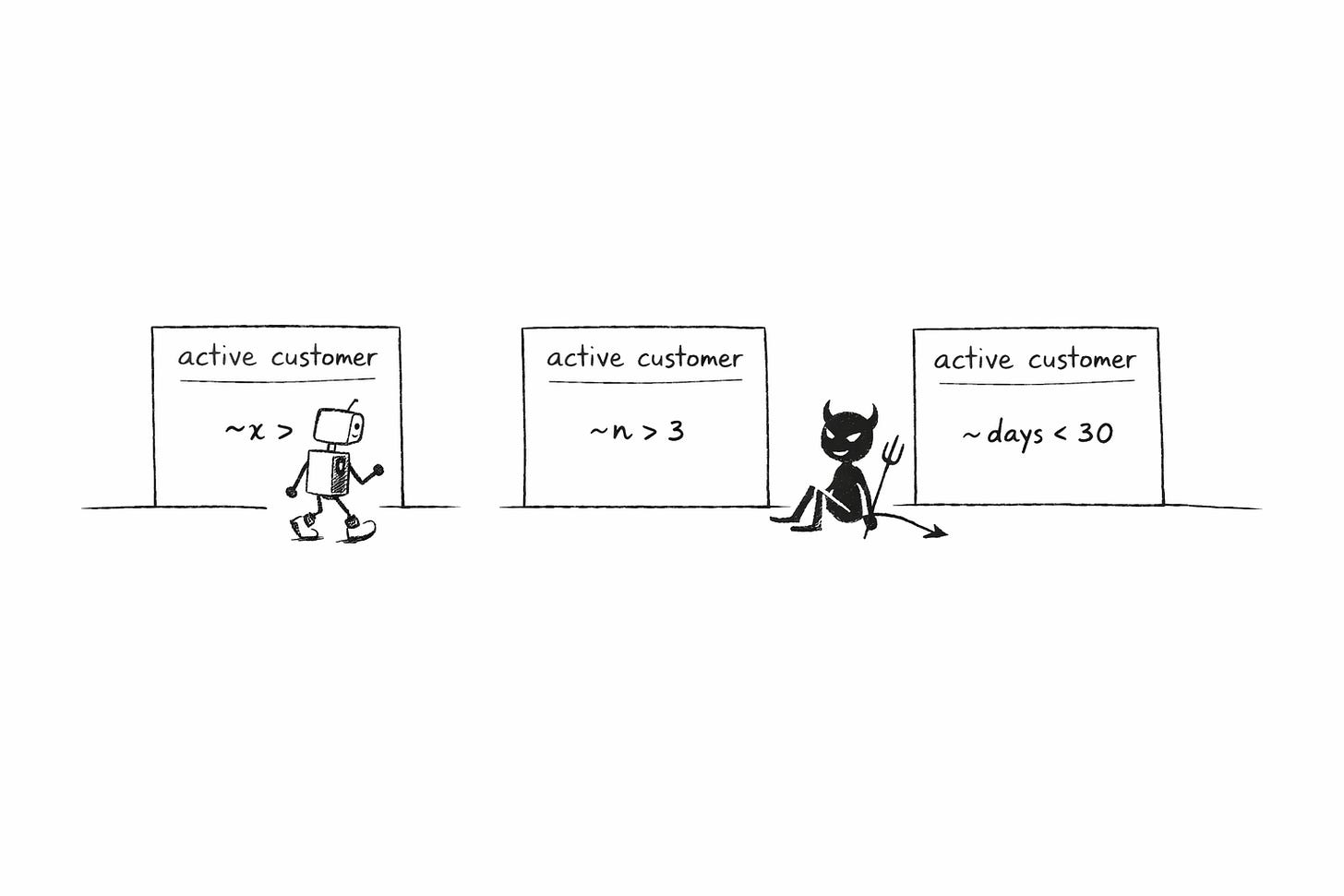

Here’s the rule: if a business concept or metric isn’t deterministic, owned, and encoded, your agent cannot be trusted to act on it. That’s the minimum bar. And almost nobody is meeting it.

A minimum viable semantic contract looks like this:

Metric / concept name (e.g., “active customer”)

Definition — plain English, no ambiguity

Formula / logic — deterministic, not LLM-inferred

Canonical source — one table, one query

Owner — person or team accountable

Change process — how updates happen, who approves

Drift test — alert when two systems disagree

If your company doesn’t have this for every metric an agent touches, you don’t have an AI reliability problem. You have a definitions problem.
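To make the checklist concrete, here is a minimal Python sketch of one contract as a typed record. It is an illustration under assumptions, not a standard schema: the class name `SemanticContract`, the `missing_fields` helper, and every example value (the 30-day window, the `analytics.orders` table, the `growth-analytics` owner) are hypothetical and only mirror the seven items listed above.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class SemanticContract:
    """One metric definition; field names mirror the checklist above."""
    name: str              # metric / concept name
    definition: str        # plain-English meaning
    formula: str           # deterministic logic, e.g. a SQL expression
    canonical_source: str  # the one table or query that is authoritative
    owner: str             # accountable person or team
    change_process: str    # how updates happen and who approves
    drift_test: str        # identifier of the check that compares systems

    def missing_fields(self) -> List[str]:
        """Return the names of fields left empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Hypothetical example values, for illustration only.
active_customer = SemanticContract(
    name="active_customer",
    definition="A customer with at least one paid order in the last 30 days.",
    formula="COUNT(DISTINCT customer_id) WHERE paid_at >= CURRENT_DATE - 30",
    canonical_source="analytics.orders",
    owner="growth-analytics",
    change_process="Pull request approved by the metrics council",
    drift_test="",  # left blank on purpose to show the completeness check
)

print(active_customer.missing_fields())  # ['drift_test']
```

The record is frozen so a definition cannot be changed silently at runtime; any change has to go through whatever change process the contract names.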

AI agents can’t run on the ambiguity that analytics lived on

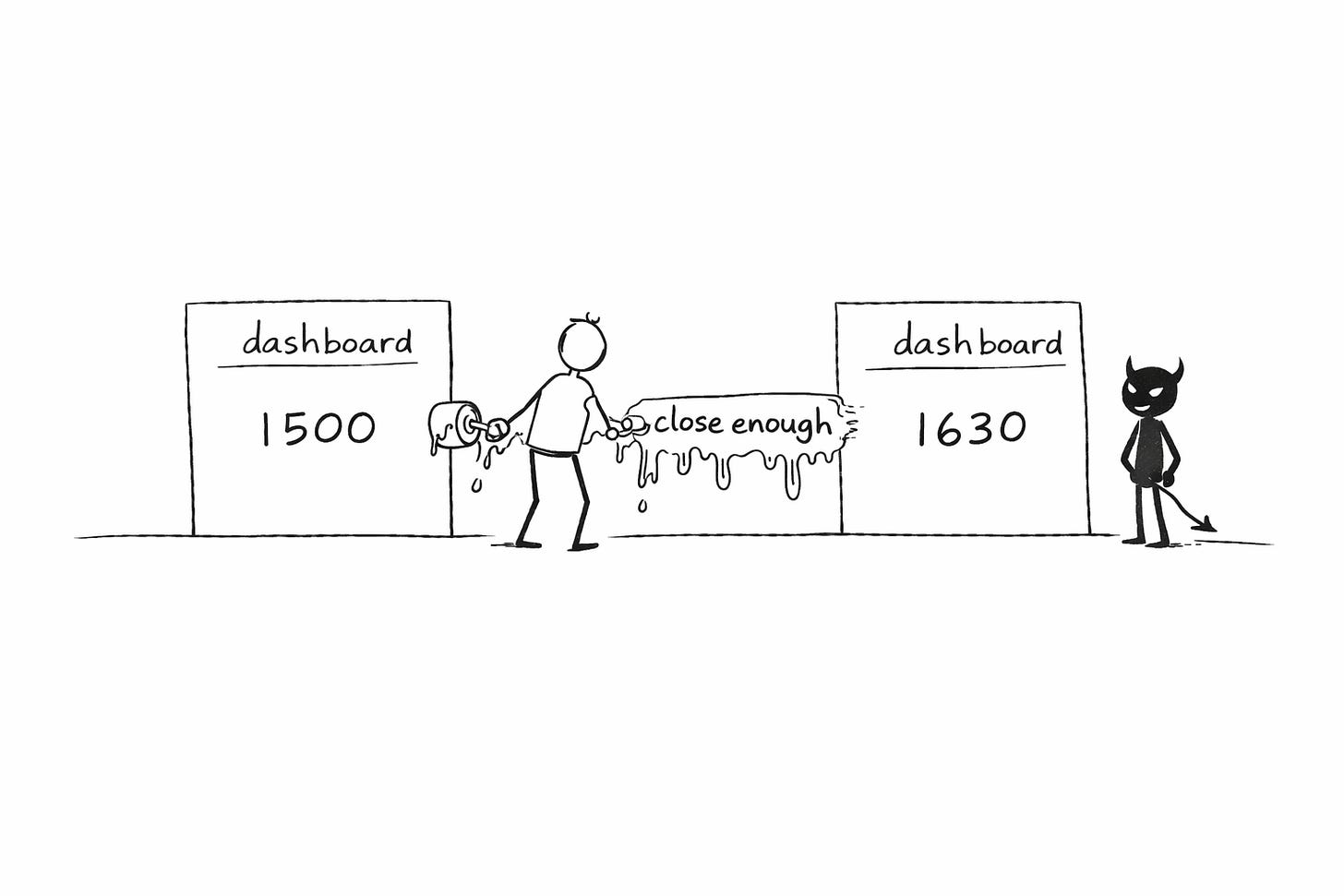

Analytics could tolerate fuzzy definitions. A dashboard with 90% uptime is fine. Two teams using slightly different churn calculations causes a confusing Monday meeting. The whole system ran on human judgment as the error-correction layer.

AI agents can’t tolerate any of that. They don’t walk down the hall. They pick one definition and act on it — in front of your customers, without supervision, at machine speed. The demand shifted from “can humans roughly agree on what churn means” to “can machines making thousands of decisions per second operate from deterministic shared definitions.” Analytics never had to meet that bar.

Snowflake’s Chief Data Analytics Officer, Anahita Tafvizi, named the dynamic: “The combination of great AI models and bad data governance brings chaos at scale. You previously had chaos; now you have scaled your chaos.” Her line about training is sharper:

“If you couldn’t train a human on the data set, how could you train AI on it?”

This is a large part of why, by one widely cited estimate, 95% of enterprise AI pilots deliver zero measurable return. Not because the models are bad. Because the definitions underneath them aren’t frozen.

And yet that’s the legacy the dying data teams leave us with.

The invisible function that used to hold meaning together

So who was keeping the definitions roughly consistent before? That’s the part nobody tracked.

Every company has a person whose job title says “senior analyst” or “head of analytics” but whose actual function is walking into meetings and saying “that’s not what we mean by churn.” The questions that separate these people from queue workers aren’t about technical skill. They’re about who defines what should be measured, who generates hypotheses before touching data, who tells you you’re wrong. That’s not analytics. That’s organizational meaning-making.

Riley Newman was Airbnb’s version. Employee #10, first data scientist. His role, in his own words, was “thought leadership and teaching people how to think about things.” He made sure “booking” meant one thing everywhere. He put as much weight on communication as on technical rigor.

And even he broke at scale. That Chesky question — two teams, two answers, both correct — happened on his watch. Benn Stancil diagnosed why: “We have the tools for governing tables, and we have tools for governing dashboards, but we’re still pretty sloppy about governing the space between the two.” That space between is where meaning lives. And humans can’t hold it past a certain scale.

When Snowflake hired its first CDAO, she found the same problem internally. Active accounts had three definitions. Her fix was centralizing teams and creating a metrics council. She became the human semantic layer.

Now those humans are leaving. 108,000 tech jobs cut in January 2026. Salesforce named data analytics roles specifically. Roughly 80% of organizational processes remain undocumented. The knowledge walks out with the person. Nobody is encoding anything on the way out — at the exact moment AI is demanding something those people were never designed to provide.

Semantic contracts are the only analyst that scales to agent-speed decisions

Even Riley Newman couldn’t hold shared meaning at scale — so Airbnb spent four years building Minerva: 12,000 metrics, 4,000 dimensions, 200 data producers. Because the human function broke. Three of Newman’s colleagues founded Transform. dbt Labs acquired them. The function kept seeking a body that scales.

That body is the semantic contract. It doesn’t just encode what Riley did with judgment. It upgrades it to a standard Riley could never have met. Riley held shared meaning for humans in meetings. The semantic contract holds it for AI agents — deterministic, always-on, machine-readable.

dbt Labs made this explicit when they open-sourced MetricFlow: “Metrics should not be probabilistic or depend on an LLM guessing each calculation. They should be deterministic.” In the old world, a smart human could hold “roughly consistent” definitions across a few teams. In the new world, “roughly consistent” gets you agents acting on wrong definitions at machine speed. Deterministic or nothing.

The industry converged on this in 2025–26. Snowflake, Databricks, and dbt Labs all shipped native semantic layers within a year. In September 2025, 17 companies — including Snowflake, Salesforce, BlackRock, ThoughtSpot, and Mistral AI — formed the Open Semantic Interchange initiative. VentureBeat called it solving “AI’s most fundamental bottleneck.” Fierce competitors publicly agreeing that fragmented definitions are the largest barrier to AI adoption. That happens because the problem is structural and everyone is hitting the same wall.

Questions to answer this week

If you’re shipping an agent on top of company data, stop and answer these before anything else:

What are the 10 metrics my agent is allowed to act on? If you can’t list them, your agent is freelancing on definitions nobody agreed to.

For each metric: where is the one canonical definition, and who owns it? If the answer is “it’s in a Confluence page somewhere” or “Sarah knows,” you don’t have a definition. You have folklore.

If two dashboards disagree today, which one is wrong — and how would you know automatically? If the answer is “we’d notice eventually,” your agent won’t notice at all.

Is the agent allowed to execute actions when the metric definition is missing or ambiguous? It shouldn’t be. Build the guardrail now.

What’s your drift alarm? Tests and monitoring that fire when two systems report different values for the same contract metric. If you don’t have one, you won’t know your agent is wrong until a customer tells you.
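The last two questions, the guardrail and the drift alarm, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `CONTRACTS` registry, `MissingDefinitionError`, `require_definition`, `has_drifted`, and the 0.1% relative tolerance are all hypothetical names and values chosen for the example.

```python
from typing import Dict, Optional

# Hypothetical registry: metric name -> canonical definition (None = not yet agreed).
CONTRACTS: Dict[str, Optional[str]] = {
    "active_customer": "paid order in the last 30 days",
    "churn": None,  # still ambiguous, so the agent must not act on it
}


class MissingDefinitionError(Exception):
    """Raised when an agent asks for a metric with no agreed definition."""


def require_definition(metric: str) -> str:
    """Guardrail: return the canonical definition or refuse to proceed."""
    definition = CONTRACTS.get(metric)
    if not definition:
        raise MissingDefinitionError(f"No canonical definition for {metric!r}")
    return definition


def has_drifted(value_a: float, value_b: float, rel_tolerance: float = 0.001) -> bool:
    """Drift check: True when two systems disagree beyond a relative tolerance."""
    baseline = max(abs(value_a), abs(value_b))
    if baseline == 0:
        return False  # both systems report zero, so there is nothing to compare
    return abs(value_a - value_b) / baseline > rel_tolerance


# The agent may act on an agreed metric...
print(require_definition("active_customer"))

# ...but is stopped on one that is missing or ambiguous.
try:
    require_definition("churn")
except MissingDefinitionError as err:
    print("blocked:", err)

# Two systems reporting the same contract metric.
print(has_drifted(10_000, 10_004))  # False: within 0.1%
print(has_drifted(10_000, 10_500))  # True: should fire an alert
```

Failing closed is the design choice here: an unknown or undefined metric raises an error instead of letting the agent guess, and the drift check is meant to run on a schedule so disagreements surface before a customer finds them.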

Your best analyst was never writing SQL. They were freezing meaning. Either encode what they did into infrastructure, or watch your agents scale chaos.

Fantastic read, and I couldn't agree more - there's a collective documentation & standardization debt that most data teams have to pay before being able to truly trust AI agents. The MVP semantic contract is spot on, but the relationships between metrics are a critical piece of context too IMO (even when they aren't mathematical or quantifiable).